Visible Layer vs Operational Layer

Platforms or moderators can genuinely support principles like open science or open access. But in practice, they operate under invisible constraints: volume, reputation, risk management, and moderation load. As a result, they implement filters that are rarely stated explicitly. The discourse remains open, while the system functions through implicit barriers.

Quiet exclusion does not require a system to deny its values. It only requires the operational criteria of exclusion to remain undefined.

A split between discourse and operation

This creates a clear structural split between what the system says and how it actually functions. At the visible layer, the language is openness, access, inclusion, and support for scholarship. At the operational layer, the practical task is sorting, filtering, reducing noise, managing risk, and limiting moderation burden.

Visible layer: openness, access, inclusion

Operational layer: sorting, filtering, noise reduction

The two layers are not necessarily contradictory in a formal sense. That is precisely what makes the phenomenon difficult to challenge. The public discourse can remain consistent while the operational reality silently narrows who gets processed, recognized, or taken seriously.

Filtering without explicit criteria

The key point is that this filtering is rarely made explicit. Platforms do not usually say, “we do not process this type of submission.” Instead, they produce generic outcomes, often framed in evaluative language, without clarifying what operational threshold was actually triggered.

This matters because ambiguity lowers reputational cost for the institution while increasing friction for the user. The system avoids openly declaring exclusion, but the person receiving the decision is left with nothing precise to correct, contest, or even understand.

The non-actionable rejection

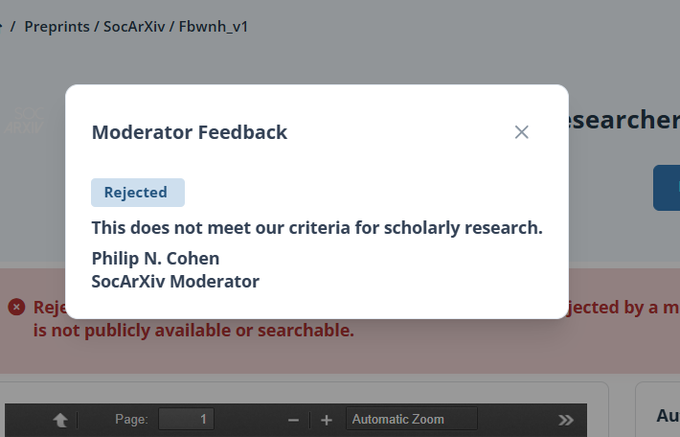

In this case, the message “This does not meet our criteria for scholarly research” is a textbook example of a non-actionable rejection. It appears definitive enough to close the case, but too vague to guide any revision.

It does not indicate whether the issue concerns format, author status, lack of institutional validation, or type of content. Because the reason is not operationally specified, no meaningful correction path is available.

Why this matters for quiet exclusion

What this shows, and what is central to quiet exclusion, is that a system does not need to contradict its stated values in order to exclude. It only needs to leave its actual operating criteria undefined.

That undefined zone is where exclusion becomes most stable. Formally, the institution can still present itself as open. Practically, certain submissions or authors can be filtered out without a clear rule ever being stated in a way that would allow public scrutiny.

This makes quiet exclusion especially hard to contest. The discourse remains coherent, the values remain intact, and yet access is still reduced through soft, distributed, and weakly articulated barriers.

Florian Morin

Author of the Ease Framework and Quiet Exclusion

Florianmorin.com